Black Forest Labs has expanded its FLUX.2 lineup with the release of two new variants:

https://huggingface.co/black-forest-labs/FLUX.2-klein-9b-kv

https://huggingface.co/black-forest-labs/FLUX.2-klein-9b-kv-fp8


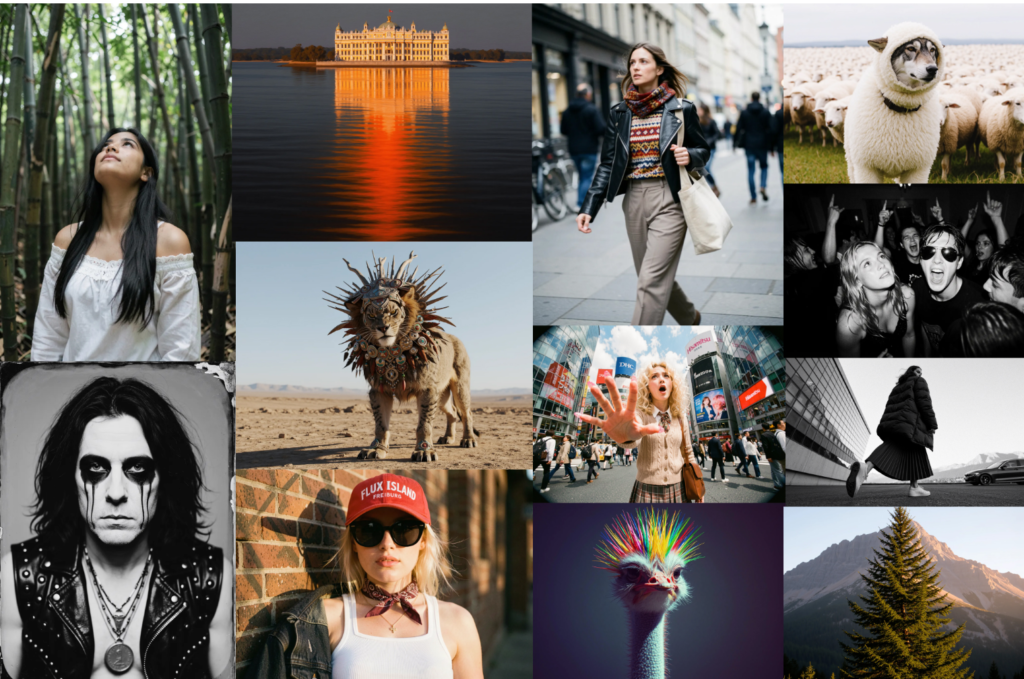

These models focus on improving real-time image generation and multi-image editing, continuing the trend toward faster and more interactive AI workflows.

FLUX.2 Klein is designed as a compact yet powerful model family that combines text-to-image generation with image-to-image editing. The goal is to enable creators to iterate quickly, making adjustments and refinements in near real time without sacrificing visual quality.

One of the key features of these models is key-value (KV) caching. When reference images are supplied, the model can store the attention keys and values computed for the reference-image tokens and reuse them on later generation steps instead of recomputing them each time. As a result, workflows that involve multiple reference images can run significantly faster, which makes the models well suited to iterative creative tasks.
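To make the caching idea concrete, here is a toy, single-head attention sketch in pure Python. It is not FLUX.2 Klein's code. The names `ToyAttention` and `run` and the tiny identity projections are hypothetical. The sketch assumes that the reference tokens' keys and values do not depend on the noisy target image or the timestep. That is the condition under which they can be computed once and reused.

```python
import math

# Toy single-head attention used only to illustrate caching the keys/values of
# reference-image tokens. Hypothetical code, not the FLUX.2 Klein implementation.


def matvec(weight, vec):
    """Multiply a (rows x cols) weight matrix by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in weight]


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class ToyAttention:
    def __init__(self, w_q, w_k, w_v):
        self.w_q, self.w_k, self.w_v = w_q, w_k, w_v
        self.kv_projections = 0  # number of tokens projected to keys/values

    def project_kv(self, tokens):
        self.kv_projections += len(tokens)
        keys = [matvec(self.w_k, t) for t in tokens]
        values = [matvec(self.w_v, t) for t in tokens]
        return keys, values

    def attend(self, target_tokens, ref_tokens, ref_kv=None):
        """Target tokens attend over [reference + target] keys/values.

        If ref_kv (cached reference keys/values) is given, it is reused;
        otherwise the reference tokens are projected again on this call.
        """
        if ref_kv is None:
            ref_kv = self.project_kv(ref_tokens)
        ref_keys, ref_values = ref_kv
        tgt_keys, tgt_values = self.project_kv(target_tokens)
        keys, values = ref_keys + tgt_keys, ref_values + tgt_values
        scale = 1.0 / math.sqrt(len(keys[0]))
        outputs = []
        for tok in target_tokens:
            q = matvec(self.w_q, tok)
            weights = softmax([scale * sum(a * b for a, b in zip(q, k)) for k in keys])
            dim = len(values[0])
            outputs.append([sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)])
        return outputs


def run(steps, use_cache):
    identity = [[1.0, 0.0], [0.0, 1.0]]
    attn = ToyAttention(identity, identity, identity)
    reference = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # 3 reference-image tokens
    target = [[0.2, 0.8], [0.9, 0.1]]  # 2 tokens of the image being generated
    cache = attn.project_kv(reference) if use_cache else None
    out = None
    for _ in range(steps):
        # A real sampler would update the target tokens every step; they are
        # fixed here so the cached and uncached outputs can be compared directly.
        out = attn.attend(target, reference, ref_kv=cache)
    return out, attn.kv_projections


out_cached, n_cached = run(steps=4, use_cache=True)
out_plain, n_plain = run(steps=4, use_cache=False)
print(n_cached, n_plain)  # 11 20
print(out_cached == out_plain)  # True
```

Both runs produce identical outputs. The cached run performs 11 key/value projections instead of 20, because the three reference tokens are projected once rather than on each of the four steps. The saving grows with the number of reference images and sampling steps.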

The standard FLUX.2 Klein 9B-KV model is the full-precision release and is aimed at users with high-end hardware. Running it typically requires a large amount of VRAM, which puts it in the range of top-tier consumer GPUs or workstation-class setups.
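A rough way to put numbers on "a large amount of VRAM" is to multiply the parameter count by the bytes per parameter. The sketch below makes two assumptions. It uses exactly 9 billion parameters, taken from the "9B" in the name. It shows both FP32 and BF16 storage, because the dtype of the full-precision checkpoint is not stated here. It counts weights only, so the text encoder, VAE, activations and any KV cache come on top.

```python
# Back-of-the-envelope weight-memory estimate (assumption: 9e9 parameters).
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2}


def weight_memory(num_params, dtype):
    """Return (decimal GB, binary GiB) needed to hold the weights alone."""
    total_bytes = num_params * BYTES_PER_PARAM[dtype]
    return total_bytes / 1e9, total_bytes / 2**30


NUM_PARAMS = 9_000_000_000  # "9B" from the model name, rounded

for dtype in ("fp32", "bf16"):
    gb, gib = weight_memory(NUM_PARAMS, dtype)
    print(f"{dtype}: {gb:.1f} GB ({gib:.2f} GiB) for weights alone")
# fp32: 36.0 GB (33.53 GiB) for weights alone
# bf16: 18.0 GB (16.76 GiB) for weights alone
```

Halving the bytes per parameter halves these figures. That is the main lever that quantized variants pull.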

The FP8 variant, FLUX.2 Klein 9B-KV FP8, is optimized for efficiency. FP8 quantization reduces memory usage and can speed up inference on GPUs with native FP8 support while maintaining strong output quality. This makes the variant more accessible than the standard version for users with limited GPU resources. Further-quantized versions in the GGUF format could bring the model to an even wider range of consumer-grade GPUs.
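FP8 saves memory by storing each weight in a single byte. The E4M3 format only covers magnitudes up to 448 and has three mantissa bits, so weights are usually rescaled before rounding. The sketch below simulates one common scheme, a single per-tensor scale, in pure Python. Whether the FLUX.2 Klein FP8 checkpoint uses per-tensor, per-channel, or some other scaling is an assumption, and the function names are hypothetical. Real speedups also depend on hardware FP8 support, which this simulation does not model.

```python
import math

# Simulated FP8 E4M3 ("e4m3fn") per-tensor quantization in pure Python.
# Illustrative only: the scaling scheme of the actual FP8 checkpoint is an assumption.
E4M3_MAX = 448.0   # largest finite E4M3 value
E4M3_MIN_EXP = -6  # exponent of the smallest normal value, 2**-6
MANTISSA_BITS = 3


def round_to_e4m3(x):
    """Round a float to the nearest value representable in FP8 E4M3."""
    if x == 0.0:
        return 0.0
    mag = min(abs(x), E4M3_MAX)  # saturate instead of overflowing
    _, e = math.frexp(mag)  # mag = m * 2**e with 0.5 <= m < 1
    exp = max(e - 1, E4M3_MIN_EXP)  # subnormals share the minimum exponent
    step = 2.0 ** (exp - MANTISSA_BITS)  # gap between neighbouring E4M3 values
    q = min(round(mag / step) * step, E4M3_MAX)
    return math.copysign(q, x)


def quantize_fp8(weights):
    """Scale a tensor so its largest magnitude maps to E4M3_MAX, then round."""
    amax = max(abs(w) for w in weights)
    scale = amax / E4M3_MAX if amax > 0 else 1.0
    return [round_to_e4m3(w / scale) for w in weights], scale


def dequantize_fp8(q_values, scale):
    """Map rounded FP8 values back to the original range."""
    return [v * scale for v in q_values]


weights = [0.013, -0.27, 0.91, -1.5, 0.0042]
q, scale = quantize_fp8(weights)
restored = dequantize_fp8(q, scale)
for w, r in zip(weights, restored):
    print(f"{w:+.4f} -> {r:+.4f}")
```

With three mantissa bits, each value in the normal range is rounded to within 1/16 (about 6%) of its scaled magnitude. Choosing the scale from the tensor's largest value keeps weights inside that range and away from overflow.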

These releases highlight a broader shift in generative AI toward low-latency, interactive systems. Rather than focusing purely on maximum quality, newer models aim to balance speed, efficiency, and usability. This is particularly important for creators working in workflows that require constant iteration, such as compositing, character consistency, and scene refinement.

Both models are currently distributed under a non-commercial license, which restricts them to non-commercial uses such as research and experimentation.

FLUX.2 Klein 9B-KV and its FP8 counterpart demonstrate how architectural optimizations like KV caching and quantization can significantly improve real-world usability. As AI image generation continues to evolve, models like these are helping bridge the gap between high-quality output and real-time performance.


Further Reading

How to Use FLUX.2-dev Turbo LoRA in ComfyUI with GGUF Models
