A newly released open-source project, daVinci-MagiHuman, is gaining attention for its ability to generate highly realistic human videos with synchronized audio—while maintaining impressive speed and efficiency.

If you’re thinking about purchasing a new GPU, we’d greatly appreciate it if you used our Amazon Associate links. The price you pay will be exactly the same, but Amazon provides us with a small commission for each purchase. It’s a simple way to support our site and helps us keep creating useful content for you. Recommended GPUs: RTX 5090, RTX 5080, and RTX 5070. #ad

Developed by GAIR in collaboration with Sand.ai, the model introduces a simplified yet powerful approach to audio-video generation that could reshape how creators and developers work with AI-generated content.

A Single-Stream Approach to Audio-Video Generation

At the core of daVinci-MagiHuman is a single-stream Transformer architecture, designed to process text, video, and audio together in one unified sequence. Unlike traditional systems that rely on multiple modules or cross-attention pipelines, this model uses a streamlined structure based entirely on self-attention.

This design reduces system complexity while improving training and inference efficiency. The model takes text prompts, a reference image, and noisy audio/video inputs, then jointly refines them into a synchronized output.

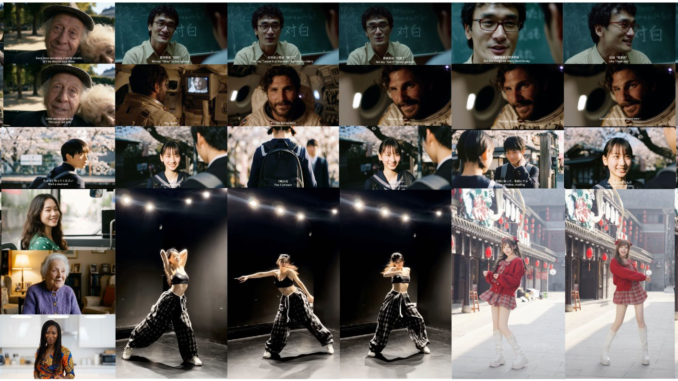

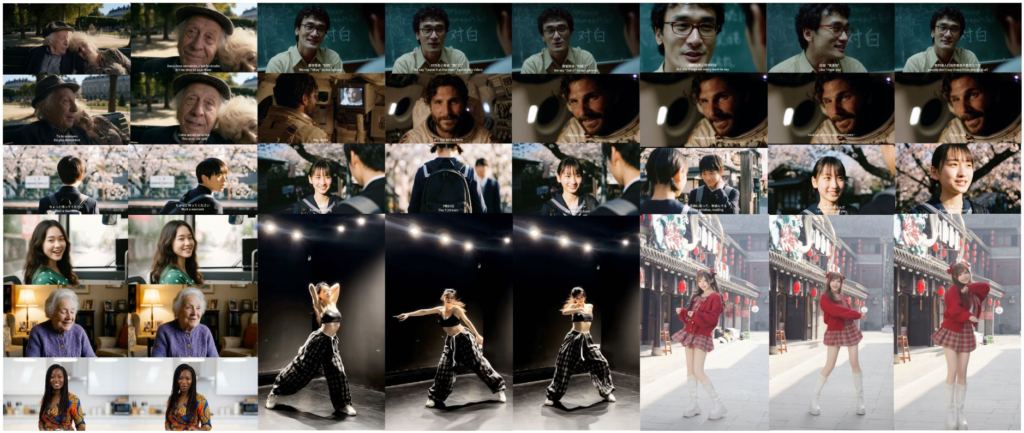

Focused on Human Realism

One of the standout features of daVinci-MagiHuman is its emphasis on human-centric generation. The model is optimized to produce:

-

Expressive facial movements

-

Natural coordination between speech and expressions

-

Realistic body motion

-

Accurate audio-video synchronization

These capabilities make it particularly suitable for applications like virtual influencers, digital avatars, and AI-generated talking characters.

Speed and Efficiency Gains

Despite its large scale—featuring a 15B-parameter Transformer with 40 layers—the model is designed for fast inference.

According to the project details:

-

A 5-second 256p video can be generated in about 2 seconds

-

A 1080p video takes around 38 seconds on a single H100 GPU

This performance is achieved through several engineering optimizations, including latent-space super-resolution, model distillation, and a lightweight VAE decoder.

Strong Benchmark Performance

In both automated and human evaluations, daVinci-MagiHuman shows competitive results against other leading models.

-

Achieves strong visual quality and text alignment scores

-

Demonstrates higher preference rates in human evaluations compared to similar systems

-

Delivers lower word error rates for improved speech clarity

These results suggest the model is not only efficient but also capable of producing high-quality outputs.

Multilingual and Open Source

The model supports multiple languages, including English, Chinese, Japanese, Korean, German, and French, making it suitable for global content generation.

Importantly, the entire stack is open source, including:

-

Base model

-

Distilled model

-

Super-resolution model

-

Inference code

This open approach allows developers and researchers to experiment, customize, and build on top of the system.

Why It Matters

The release of daVinci-MagiHuman highlights a growing trend in AI: simplifying architecture while improving performance.

By removing the complexity of multi-stream designs and focusing on a unified model, this project demonstrates that efficiency and quality don’t have to be trade-offs. For creators working with AI-generated video—especially character-driven content—this could significantly lower the barrier to producing high-quality results.

Conclusion

daVinci-MagiHuman represents a meaningful step forward in open-source generative AI. Its combination of speed, realism, and simplicity makes it a compelling option for developers, researchers, and content creators alike.

As AI video generation continues to evolve, models like this may play a key role in shaping the next generation of digital storytelling.

Leave a Reply