A few days ago, I tried to install a standalone Stable Video Diffusion (SVD) Streamlit app on Windows. The process was complicated and far from straightforward. Then I found that installing SVD on ComfyUI is much easier. Follow along to explore the new image-to-video capability introduced by SVD.


Requirements

- A working ComfyUI installation – https://github.com/comfyanonymous/ComfyUI

- ComfyUI Manager – https://github.com/ltdrdata/ComfyUI-Manager

- NVIDIA GPU with 10 GB of VRAM or more (e.g., RTX 4070, 4080, or 4090)

Installation

- Start ComfyUI.

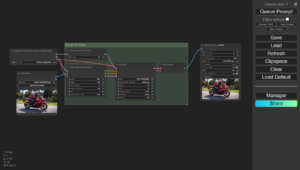

- Drag and drop this image onto the ComfyUI canvas.

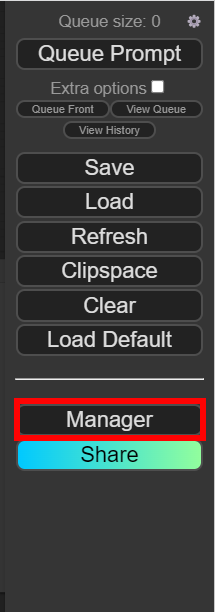

- Click Manager in the ComfyUI window.

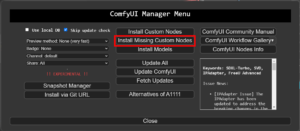

- Click Install Models in the ComfyUI Manager menu.

- Search for svd and click Install for both Stable Video Diffusion Image-to-Video and Stable Video Diffusion Image-to-Video (XT). The first model generates 14-frame videos and the second generates 25-frame videos. If these options do not appear, click Update All in the ComfyUI Manager menu. Wait for the installation to finish, then close the window.

- Click Install Missing Custom Nodes and install any missing nodes. Close the window when the installation is done.

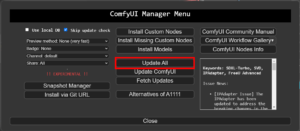

- Click Update All to update ComfyUI and its nodes.

- Close ComfyUI and restart it.
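To confirm the downloads finished before restarting, a short Python check can look for the checkpoint files. This is a minimal sketch, and it assumes ComfyUI Manager saved the models as `svd.safetensors` and `svd_xt.safetensors` under `ComfyUI/models/checkpoints`; these file names and the path are assumptions, so adjust them if your installation differs. The frame counts come from the installation step above.

```python
from pathlib import Path

# ASSUMPTION: these are the file names ComfyUI Manager uses; check your own
# models/checkpoints folder and edit them if they differ.
EXPECTED_MODELS = {
    "svd.safetensors": 14,     # Stable Video Diffusion Image-to-Video: 14 frames
    "svd_xt.safetensors": 25,  # Stable Video Diffusion Image-to-Video (XT): 25 frames
}


def find_svd_models(comfyui_root):
    """Return {file name: frame count} for the expected SVD checkpoints that exist."""
    checkpoints = Path(comfyui_root) / "models" / "checkpoints"
    found = {}
    for name, frames in EXPECTED_MODELS.items():
        if (checkpoints / name).is_file():
            found[name] = frames
    return found


if __name__ == "__main__":
    import sys

    # Pass the ComfyUI folder as the first argument; defaults to ./ComfyUI.
    root = sys.argv[1] if len(sys.argv) > 1 else "ComfyUI"
    models = find_svd_models(root)
    if not models:
        print("No SVD checkpoints found; rerun Install Models in ComfyUI Manager.")
    for name, frames in models.items():
        print(f"{name}: generates {frames} frames")
```

Running it as `python check_svd.py C:\path\to\ComfyUI` (the script name is only an example) lists whichever of the two models is present.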

Example

- Start ComfyUI.

- If the SVD workflow is not loaded, drag and drop the first image in this article onto the ComfyUI canvas again.

- Drag and drop an image with a width of 576 and a height of 1024 onto the Load Image node.

- Adjust motion_bucket_id. I used 200 in this example to get a lot of motion in the final video; for a more static video, use a lower value (below 64). Click Queue Prompt to generate the video. The output is saved in the ComfyUI\output folder.
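To try several motion_bucket_id values without clicking through the UI, you can queue prompts over ComfyUI's local HTTP API instead of the Queue Prompt button. The sketch below rests on a few assumptions: you exported the workflow in API format (Save (API Format) in the classic menu, which may require enabling dev mode options) to a hypothetical file named `svd_workflow_api.json`; the server listens on the default `http://127.0.0.1:8188`; and the conditioning node's class type is `SVD_img2vid_Conditioning`. Check these against your own export before relying on them.

```python
import copy
import json
import os
import urllib.request

# ASSUMPTION: default local ComfyUI address and a hypothetical export file name.
COMFYUI_URL = "http://127.0.0.1:8188/prompt"
WORKFLOW_FILE = "svd_workflow_api.json"


def set_motion_bucket_id(workflow, value):
    """Return a copy of an API-format workflow with motion_bucket_id set on
    every SVD_img2vid_Conditioning node; other nodes are left unchanged."""
    updated = copy.deepcopy(workflow)
    for node in updated.values():
        if node.get("class_type") == "SVD_img2vid_Conditioning":
            node["inputs"]["motion_bucket_id"] = value
    return updated


def queue_prompt(workflow, url=COMFYUI_URL):
    """POST the workflow to ComfyUI's /prompt endpoint and return the JSON reply."""
    data = json.dumps({"prompt": workflow}).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())


# Only runs when the exported workflow file is present next to the script.
if __name__ == "__main__" and os.path.exists(WORKFLOW_FILE):
    with open(WORKFLOW_FILE, encoding="utf-8") as f:
        base = json.load(f)
    # Low values give a more static video; 200 gives a lot of motion.
    for value in (48, 127, 200):
        print(queue_prompt(set_motion_bucket_id(base, value)))
```

Each queued prompt ends up in the ComfyUI\output folder just like one started from the UI, so you can compare the motion levels side by side.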

Sample Input

Sample Output

Notes

- The following resolutions work: 576 x 1024, 1024 x 576, 576 x 768, and 768 x 576.

- The input image does not have to be the exact size. If its aspect ratio matches, the image is scaled; if it does not, the image is scaled and cropped.

- GPU memory usage is about 8 GB when generating 14 frames with the svd model, and about 14 GB when generating 25 frames with the svd-xt model.

- I found a nice Reddit post comparing some of these parameters.
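The scaling and cropping described in the notes behaves like a cover-and-crop: scale the input until it covers the target size, then trim whatever overflows. The sketch below is an illustration of that idea, not ComfyUI's own code; it assumes the crop is centered, and the helper name `fit_to_target` is hypothetical.

```python
def fit_to_target(src_w, src_h, dst_w, dst_h):
    """Scale (src_w, src_h) so it covers (dst_w, dst_h), then center-crop.

    Returns (scaled_w, scaled_h, crop_box), where crop_box is
    (left, top, right, bottom) in the scaled image's pixel coordinates.
    """
    # The larger ratio guarantees both sides end up at least as big as the target.
    scale = max(dst_w / src_w, dst_h / src_h)
    # max() guards against rounding a side to one pixel below the target.
    scaled_w = max(dst_w, round(src_w * scale))
    scaled_h = max(dst_h, round(src_h * scale))
    # Split the overflow evenly between the two edges (centered crop).
    left = (scaled_w - dst_w) // 2
    top = (scaled_h - dst_h) // 2
    return scaled_w, scaled_h, (left, top, left + dst_w, top + dst_h)


if __name__ == "__main__":
    # Same aspect ratio as 576 x 1024: scaled only, nothing cropped.
    print(fit_to_target(1152, 2048, 576, 1024))
    # Square input for a 576 x 1024 target: scaled to 1024 x 1024, sides cropped.
    print(fit_to_target(1000, 1000, 576, 1024))
```

The first call returns a crop box equal to the full scaled image, matching the "scaled only" case in the notes; the second trims 224 pixels from each side of the square image.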

Reference

- The workflow is modified from the one on this page. I replaced the SaveAnimatedWEBP node with the Video Combine node, which offers more flexibility in output format.
